The Dashboard for English Learners

A Dashboard for Learning English

Part Five in a series

This post, the fifth in a series, explains the "English Learners" indicator on the California School Dashboard. The Dashboard, which made its debut in March of 2017, provides significant information about the academic "performance" of students learning English. If you don't understand the basics about the Dashboard — especially how it blends "status" and "change" — you might want to read this blog series from the start.

What does it mean to be an English Learner?

Over 40% of California's students speak a language other than English at home. About a fifth of California's students are categorized as "English Learners," which means that they aren't fully fluent in English yet. As described in Lesson 6.3, an important role of California's school system is to ensure that each student progresses to fluency in English regardless of their starting point. California's school finance system provides funds to school districts and charter schools specifically with this educational need in mind.

In 2018 the state of California adopted a new set of tests to gauge student proficiency in English, collectively known as "the ELPAC." (The acronym, which is always preceded by "the," stands for English Language Proficiency Assessments for California.) After an initial assessment, students learning English are tested annually to evaluate their progress. When a student scores high enough, teachers and parents may reclassify him or her as fluent, after which the test is no longer administered. The ELPAC replaced an older test, the CELDT, in 2018.

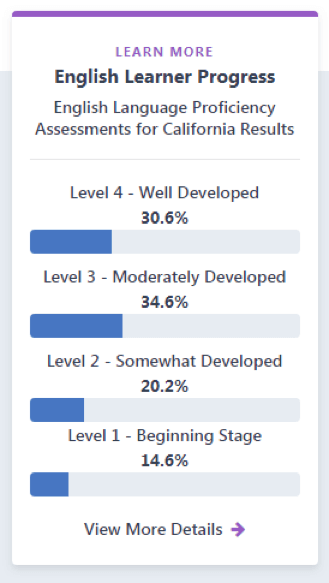

For now, the ELPAC is still in transition, and the Dashboard only summarizes results in broad terms. English fluency "status" scores are grouped into four stages of proficiency, listed in the image to the right. Once multiple years of ELPAC results become available, the Dashboard will begin calculating a "change" measure that evaluates school and district progress in supporting students learning English.

Is my school good at teaching English Learners?

You cannot tell whether a school is good at teaching English Learners just by averaging ELPAC scores (or levels of proficiency) in a single year. The mix of students in any school can change significantly from year to year based on immigration patterns, economic factors or sheer chance. A great school for English Learners (ELs) is one that quickly advances the English language skills of each individual student from one year to the next, regardless of where they begin. Data that follows the same individual students from year to year (known as "longitudinal" data) is essential to interpreting patterns of success.
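To see why a single-year average can mislead, here is a minimal sketch in Python. Everything in it is made up for illustration: the student IDs, the proficiency levels (1 is lowest, 4 is highest), and the two helper functions are hypothetical, and this is not how the Dashboard calculates anything. It simply compares a one-year snapshot average with the growth of students who were tested in both years.

```python
from statistics import mean

# Hypothetical proficiency levels (1 = lowest, 4 = highest), keyed by made-up student IDs.
year1 = {"s1": 1, "s2": 2, "s3": 3}

# In year 2, student s3 has been reclassified as fluent and is no longer tested,
# while two newcomers (s4 and s5) have arrived at level 1.
year2 = {"s1": 2, "s2": 3, "s4": 1, "s5": 1}


def snapshot_average(levels):
    """Average level of everyone tested in one year (no matching across years)."""
    return mean(levels.values())


def matched_growth(before, after):
    """Average change in level for students who were tested in both years."""
    matched = before.keys() & after.keys()
    return mean(after[s] - before[s] for s in matched)


avg1 = snapshot_average(year1)
avg2 = snapshot_average(year2)
growth = matched_growth(year1, year2)

print(f"Year 1 average level: {avg1}")
print(f"Year 2 average level: {avg2}")
print(f"Average growth of matched students: {growth}")
```

In this invented example the school's average level falls from one year to the next, even though every student who stayed moved up a level. The snapshot mostly reflects who enrolled and who was reclassified. The matched comparison reflects what happened to the students the school actually taught.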

Even when longitudinal test score data becomes available, it will take some time for California to develop meaningful performance measures for the English Learner Dashboard; in 2019 the State Board expects to define threshold scores, a necessary early step in the process.

How does my school compare?

At this point you might be wondering how your school measures up when it comes to educating English Learners. When you visit the Dashboard and look up your school, you won't get a clear answer. The Dashboard for English Learners is a work in progress, and it may take a while for the State Board to get it figured out. The root cause of this delay is the state's decision to change from an old test (CELDT) to a new one (ELPAC). In a few years this transition will be forgotten, and we will edit this paragraph out of this post. In the meantime, the transition is a great example of the practical challenges of change.

Context: Ed100 Lesson 9.7

Part 1: Overview

Part 2: The Indicators

Part 3: Performance Colors

Part 4: Math and English

Part 5: English Learners

Part 6: Attendance and Absenteeism

Part 7: Suspensions

Part 8: Graduation

Part 9: College and Career Success

Part 10: "Local" Indicators for School Districts

Tags on this post

Dashboard, English learners

